
These AWS Certified Data Analytics - Specialty certification questions and exam summary help you focus on the exam. This guide also helps you stay on track for the DAS-C01 exam so that you can certify with a good score.

AWS (DAS-C01) Certification Summary

AWS (DAS-C01) Data Analytics Specialty Certification Exam Syllabus

01. Collection - 18%

Determine the operational characteristics of the collection system
- Evaluate that the data loss is within tolerance limits in the event of failures
- Evaluate costs associated with data acquisition, transfer, and provisioning from various sources into the collection system (e.g., networking, bandwidth, ETL/data migration costs)
- Assess the failure scenarios that the collection system may undergo, and take remediation actions based on impact
- Determine data persistence at various points of data capture
- Identify the latency characteristics of the collection system

Select a collection system that handles the frequency, volume, and the source of data
- Describe and characterize the volume and flow characteristics of incoming data (streaming, transactional, batch)
- Match flow characteristics of data to potential solutions
- Assess the tradeoffs between various ingestion services taking into account scalability, cost, fault tolerance, latency, etc.
- Explain the throughput capability of a variety of different types of data collection and identify bottlenecks
- Choose a collection solution that satisfies connectivity constraints of the source data system

Select a collection system that addresses the key properties of data, such as order, format, and compression
- Describe how to capture data changes at the source
- Discuss data structure and format, compression applied, and encryption requirements
- Distinguish the impact of out-of-order delivery of data, duplicate delivery of data, and the tradeoffs between at-most-once, exactly-once, and at-least-once processing
- Describe how to transform and filter data during the collection process

02. Storage and Data Management - 22%

Determine the operational characteristics of the storage solution for analytics
- Determine the appropriate storage service(s) on the basis of cost vs. performance
- Understand the durability, reliability, and latency characteristics of the storage solution based on requirements
- Determine the requirements of a system for strong vs. eventual consistency of the storage system
- Determine the appropriate storage solution to address data freshness requirements

Determine data access and retrieval patterns
- Determine the appropriate storage solution based on update patterns (e.g., bulk, transactional, micro batching)
- Determine the appropriate storage solution based on access patterns (e.g., sequential vs. random access, continuous usage vs. ad hoc)
- Determine the appropriate storage solution to address change characteristics of data (append-only changes vs. updates)
- Determine the appropriate storage solution for long-term storage vs. transient storage
- Determine the appropriate storage solution for structured vs. semi-structured data
- Determine the appropriate storage solution to address query latency requirements

Select appropriate data layout, schema, structure, and format
- Determine appropriate mechanisms to address schema evolution requirements
- Select the storage format for the task
- Select the compression/encoding strategies for the chosen storage format
- Select the data sorting and distribution strategies and the storage layout for efficient data access
- Explain the cost and performance implications of different data distributions, layouts, and formats (e.g., size and number of files)
- Implement data formatting and partitioning schemes for data-optimized analysis

Define data lifecycle based on usage patterns and business requirements
- Determine the strategy to address data lifecycle requirements
- Apply the lifecycle and data retention policies to different storage solutions

Determine the appropriate system for cataloging data and managing metadata
- Evaluate mechanisms for discovery of new and updated data sources
- Evaluate mechanisms for creating and updating data catalogs and metadata
- Explain mechanisms for searching and retrieving data catalogs and metadata
- Explain mechanisms for tagging and classifying data

03. Processing - 24%

Determine appropriate data processing solution requirements
- Understand data preparation and usage requirements
- Understand different types of data sources and targets
- Evaluate performance and orchestration needs
- Evaluate appropriate services for cost, scalability, and availability

Design a solution for transforming and preparing data for analysis
- Apply appropriate ETL/ELT techniques for batch and real-time workloads
- Implement failover, scaling, and replication mechanisms
- Implement techniques to address concurrency needs
- Implement techniques to improve cost-optimization efficiencies
- Apply orchestration workflows
- Aggregate and enrich data for downstream consumption

Automate and operationalize data processing solutions
- Implement automated techniques for repeatable workflows
- Apply methods to identify and recover from processing failures
- Deploy logging and monitoring solutions to enable auditing and traceability

04. Analysis and Visualization - 18%

Determine the operational characteristics of the analysis and visualization solution
- Determine costs associated with analysis and visualization
- Determine scalability associated with analysis
- Determine failover recovery and fault tolerance within the RPO/RTO
- Determine the availability characteristics of an analysis tool
- Evaluate dynamic, interactive, and static presentations of data
- Translate performance requirements to an appropriate visualization approach (pre-compute and consume static data vs. consume dynamic data)

Select the appropriate data analysis solution for a given scenario
- Evaluate and compare analysis solutions
- Select the right type of analysis based on the customer use case (streaming, interactive, collaborative, operational)

Select the appropriate data visualization solution for a given scenario
- Evaluate output capabilities for a given analysis solution (metrics, KPIs, tabular, API)
- Choose the appropriate method for data delivery (e.g., web, mobile, email, collaborative notebooks)
- Choose and define the appropriate data refresh schedule
- Choose appropriate tools for different data freshness requirements (e.g., Amazon Elasticsearch Service vs. Amazon QuickSight vs. Amazon EMR notebooks)
- Understand the capabilities of visualization tools for interactive use cases (e.g., drill down, drill through and pivot)
- Implement the appropriate data access mechanism (e.g., in memory vs. direct access)
- Implement an integrated solution from multiple heterogeneous data sources

05. Security - 18%

Select appropriate authentication and authorization mechanisms
- Implement appropriate authentication methods (e.g., federated access, SSO, IAM)
- Implement appropriate authorization methods (e.g., policies, ACL, table/column level permissions)
- Implement appropriate access control mechanisms (e.g., security groups, role-based control)

Apply data protection and encryption techniques
- Determine data encryption and masking needs
- Apply different encryption approaches (server-side encryption, client-side encryption, AWS KMS, AWS CloudHSM)
- Implement at-rest and in-transit encryption mechanisms
- Implement data obfuscation and masking techniques
- Apply basic principles of key rotation and secrets management

Apply data governance and compliance controls
- Determine data governance and compliance requirements
- Understand and configure access and audit logging across data analytics services
- Implement appropriate controls to meet compliance requirements

AWS Data Analytics Specialty (DAS-C01) Certification Questions

01. A financial company uses Amazon EMR for its analytics workloads. During the company’s annual security audit, the security team determined that none of the EMR clusters’ root volumes are encrypted. The security team recommends the company encrypt its EMR clusters’ root volume as soon as possible. Which solution would meet these requirements?
a) Enable at-rest encryption for EMR File System (EMRFS) data in Amazon S3 in a security configuration. Re-create the cluster using the newly created security configuration.
b) Specify local disk encryption in a security configuration. Re-create the cluster using the newly created security configuration.
c) Detach the Amazon EBS volumes from the master node. Encrypt the EBS volume and attach it back to the master node.
d) Re-create the EMR cluster with LZO encryption enabled on all volumes.

02. A publisher website captures user activity and sends clickstream data to Amazon Kinesis Data Streams.
The publisher wants to design a cost-effective solution to process the data to create a timeline of user activity within a session. The solution must be able to scale depending on the number of active sessions. Which solution meets these requirements?
a) Include a variable in the clickstream data from the publisher website to maintain a counter for the number of active user sessions. Use a timestamp for the partition key for the stream. Configure the consumer application to read the data from the stream and change the number of processor threads based upon the counter. Deploy the consumer application on Amazon EC2 instances in an EC2 Auto Scaling group.
b) Include a variable in the clickstream to maintain a counter for each user action during their session. Use the action type as the partition key for the stream. Use the Kinesis Client Library (KCL) in the consumer application to retrieve the data from the stream and perform the processing. Configure the consumer application to read the data from the stream and change the number of processor threads based upon the counter. Deploy the consumer application on AWS Lambda.
c) Include a session identifier in the clickstream data from the publisher website and use it as the partition key for the stream. Use the Kinesis Client Library (KCL) in the consumer application to retrieve the data from the stream and perform the processing. Deploy the consumer application on Amazon EC2 instances in an EC2 Auto Scaling group. Use an AWS Lambda function to reshard the stream based upon Amazon CloudWatch alarms.
d) Include a variable in the clickstream data from the publisher website to maintain a counter for the number of active user sessions. Use a timestamp for the partition key for the stream. Configure the consumer application to read the data from the stream and change the number of processor threads based upon the counter. Deploy the consumer application on AWS Lambda.

03. An online retail company wants to perform analytics on data in large Amazon S3 objects using Amazon EMR. An Apache Spark job repeatedly queries the same data to populate an analytics dashboard. The analytics team wants to minimize the time to load the data and create the dashboard. Which approaches could improve the performance? (Select TWO.)
a) Copy the source data into Amazon Redshift and rewrite the Apache Spark code to create analytical reports by querying Amazon Redshift.
b) Copy the source data from Amazon S3 into Hadoop Distributed File System (HDFS) using s3distcp.
c) Load the data into Spark DataFrames.
d) Stream the data into Amazon Kinesis and use the Kinesis Connector Library (KCL) in multiple Spark jobs to perform analytical jobs.
e) Use Amazon S3 Select to retrieve the data necessary for the dashboards from the S3 objects.

04. A company ingests a large set of clickstream data in nested JSON format from different sources and stores it in Amazon S3. Data analysts need to analyze this data in combination with data stored in an Amazon Redshift cluster. Data analysts want to build a cost-effective and automated solution for this need. Which solution meets these requirements?
a) Use Apache Spark SQL on Amazon EMR to convert the clickstream data to a tabular format. Use the Amazon Redshift COPY command to load the data into the Amazon Redshift cluster.
b) Use AWS Lambda to convert the data to a tabular format and write it to Amazon S3. Use the Amazon Redshift COPY command to load the data into the Amazon Redshift cluster.
c) Use the Relationalize class in an AWS Glue ETL job to transform the data and write the data back to Amazon S3. Use Amazon Redshift Spectrum to create external tables and join with the internal tables.
d) Use the Amazon Redshift COPY command to move the clickstream data directly into new tables in the Amazon Redshift cluster.

05. A media company is migrating its on-premises legacy Hadoop cluster with its associated data processing scripts and workflow to an Amazon EMR environment running the latest Hadoop release. The developers want to reuse the Java code that was written for data processing jobs for the on-premises cluster. Which approach meets these requirements?
a) Deploy the existing Oracle Java Archive as a custom bootstrap action and run the job on the EMR cluster.
b) Compile the Java program for the desired Hadoop version and run it using a CUSTOM_JAR step on the EMR cluster.
c) Submit the Java program as an Apache Hive or Apache Spark step for the EMR cluster.
d) Use SSH to connect the master node of the EMR cluster and submit the Java program using the AWS CLI.

06. A company needs to implement a near-real-time fraud prevention feature for its ecommerce site. User and order details need to be delivered to an Amazon SageMaker endpoint to flag suspected fraud. The amount of input data needed for the inference could be as much as 1.5 MB. Which solution meets the requirements with the LOWEST overall latency?
a) Create an Amazon Managed Streaming for Kafka cluster and ingest the data for each order into a topic. Use a Kafka consumer running on Amazon EC2 instances to read these messages and invoke the Amazon SageMaker endpoint.
b) Create an Amazon Kinesis Data Streams stream and ingest the data for each order into the stream. Create an AWS Lambda function to read these messages and invoke the Amazon SageMaker endpoint.
c) Create an Amazon Kinesis Data Firehose delivery stream and ingest the data for each order into the stream. Configure Kinesis Data Firehose to deliver the data to an Amazon S3 bucket. Trigger an AWS Lambda function with an S3 event notification to read the data and invoke the Amazon SageMaker endpoint.
d) Create an Amazon SNS topic and publish the data for each order to the topic. Subscribe the Amazon SageMaker endpoint to the SNS topic.

07. A company is providing analytics services to its marketing and human resources (HR) departments. The departments can only access the data through their business intelligence (BI) tools, which run Presto queries on an Amazon EMR cluster that uses the EMR File System (EMRFS). The marketing data analyst must be granted access to the advertising table only. The HR data analyst must be granted access to the personnel table only. Which approach will satisfy these requirements?
a) Create separate IAM roles for the marketing and HR users. Assign the roles with AWS Glue resource-based policies to access their corresponding tables in the AWS Glue Data Catalog. Configure Presto to use the AWS Glue Data Catalog as the Apache Hive metastore.
b) Create the marketing and HR users in Apache Ranger. Create separate policies that allow access to the user's corresponding table only. Configure Presto to use Apache Ranger and an external Apache Hive metastore running in Amazon RDS.
c) Create separate IAM roles for the marketing and HR users. Configure EMR to use IAM roles for EMRFS access. Create a separate bucket for the HR and marketing data. Assign appropriate permissions so the users will only see their corresponding datasets.
d) Create the marketing and HR users in Apache Ranger. Create separate policies that allow access to the user's corresponding table only. Configure Presto to use Apache Ranger and the AWS Glue Data Catalog as the Apache Hive metastore.

08. A company is currently using Amazon DynamoDB as the database for a user support application. The company is developing a new version of the application that will store a PDF file for each support case ranging in size from 1–10 MB. The file should be retrievable whenever the case is accessed in the application. How can the company store the file in the MOST cost-effective manner?
a) Store the file in Amazon DocumentDB and the document ID as an attribute in the DynamoDB table.
b) Store the file in Amazon S3 and the object key as an attribute in the DynamoDB table.
c) Split the file into smaller parts and store the parts as multiple items in a separate DynamoDB table.
d) Store the file as an attribute in the DynamoDB table using Base64 encoding.

09. A data engineer needs to create a dashboard to display social media trends during the last hour of a large company event. The dashboard needs to display the associated metrics with a consistent latency of less than 2 minutes. Which solution meets these requirements?
a) Publish the raw social media data to an Amazon Kinesis Data Firehose delivery stream. Use Kinesis Data Analytics for SQL Applications to perform a sliding window analysis to compute the metrics and output the results to a Kinesis Data Streams data stream. Configure an AWS Lambda function to save the stream data to an Amazon DynamoDB table. Deploy a real-time dashboard hosted in an Amazon S3 bucket to read and display the metrics data stored in the DynamoDB table.
b) Publish the raw social media data to an Amazon Kinesis Data Firehose delivery stream. Configure the stream to deliver the data to an Amazon Elasticsearch Service cluster with a buffer interval of 0 seconds. Use Kibana to perform the analysis and display the results.
c) Publish the raw social media data to an Amazon Kinesis Data Streams data stream. Configure an AWS Lambda function to compute the metrics on the stream data and save the results in an Amazon S3 bucket. Configure a dashboard in Amazon QuickSight to query the data using Amazon Athena and display the results.
d) Publish the raw social media data to an Amazon SNS topic. Subscribe an Amazon SQS queue to the topic. Configure Amazon EC2 instances as workers to poll the queue, compute the metrics, and save the results to an Amazon Aurora MySQL database. Configure a dashboard in Amazon QuickSight to query the data in Aurora and display the results.

10. A real estate company is receiving new property listing data from its agents through .csv files every day and storing these files in Amazon S3. The data analytics team created an Amazon QuickSight visualization report that uses a dataset imported from the S3 files. The data analytics team wants the visualization report to reflect the current data up to the previous day. How can a data analyst meet these requirements?
a) Schedule an AWS Lambda function to drop and re-create the dataset daily.
b) Configure the visualization to query the data in Amazon S3 directly without loading the data into SPICE.
c) Schedule the dataset to refresh daily.
d) Close and open the Amazon QuickSight visualization.

Answers:
Question 01: Answer: b
Question 02: Answer: c
Question 03: Answer: c, e
Question 04: Answer: c
Question 05: Answer: b
Question 06: Answer: a
Question 07: Answer: a
Question 08: Answer: b
Question 09: Answer: a
Question 10: Answer: c

How to Register for Data Analytics Specialty Certification Exam?
● Visit the site to register for the Data Analytics Specialty Certification Exam.
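
The reasoning behind answer c of question 02 in the Data Analytics set can be sketched in a few lines. Kinesis Data Streams hashes each record's partition key with MD5 into a 128-bit integer and routes the record to the shard whose hash key range contains that value, so a session identifier used as the partition key keeps one session's events together. The sketch below is illustrative only: it assumes shards with evenly split hash ranges (real streams can have uneven ranges after resharding), and `shard_for`, `route`, and the sample events are hypothetical names, not part of any AWS SDK.

```python
import hashlib

HASH_SPACE = 2 ** 128  # MD5 digests are 128 bits wide


def shard_for(partition_key: str, shard_count: int) -> int:
    """Return the index of the (hypothetical, evenly split) shard for a key."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    hash_key = int.from_bytes(digest, "big")
    range_size = HASH_SPACE // shard_count
    # Clamp so the top of the hash space falls into the last shard.
    return min(hash_key // range_size, shard_count - 1)


def route(events, shard_count):
    """Group (session_id, action) events by shard, keeping arrival order."""
    shards = {i: [] for i in range(shard_count)}
    for session_id, action in events:
        shards[shard_for(session_id, shard_count)].append((session_id, action))
    return shards


# Made-up clickstream events for two sessions, interleaved as they arrive.
events = [
    ("session-a", "view"),
    ("session-b", "view"),
    ("session-a", "click"),
    ("session-b", "scroll"),
    ("session-a", "purchase"),
]

routed = route(events, shard_count=4)
for shard_index, records in routed.items():
    print(shard_index, records)
```

Because every record for a given session lands on a single shard, the consumer reading that shard sees the session's events in arrival order and can build the timeline without coordinating with other consumers. A timestamp or action type as the partition key would scatter a session across shards instead.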


These AWS Developer Associate certification questions and exam summary help you focus on the exam. This guide also helps you stay on track for the DVA-C01 exam so that you can certify with a good score.

AWS (DVA-C01) Certification Summary

AWS (DVA-C01) AWS-CDA Certification Exam Syllabus

01. Deployment - 22%

Deploy written code in AWS using existing CI/CD pipelines, processes, and patterns.
- Commit code to a repository and invoke build, test and/or deployment actions
- Use labels and branches for version and release management
- Use AWS CodePipeline to orchestrate workflows against different environments
- Apply AWS CodeCommit, AWS CodeBuild, AWS CodePipeline, AWS CodeStar, and AWS CodeDeploy for CI/CD purposes
- Perform a roll back plan based on application deployment policy

Deploy applications using AWS Elastic Beanstalk.
- Utilize existing supported environments to define a new application stack
- Package the application
- Introduce a new application version into the Elastic Beanstalk environment
- Utilize a deployment policy to deploy an application version (i.e., all at once, rolling, rolling with batch, immutable)
- Validate application health using Elastic Beanstalk dashboard
- Use Amazon CloudWatch Logs to instrument application logging

Prepare the application deployment package to be deployed to AWS.
- Manage the dependencies of the code module (like environment variables, config files and static image files) within the package
- Outline the package/container directory structure and organize files appropriately
- Translate application resource requirements to AWS infrastructure parameters (e.g., memory, cores)

Deploy serverless applications.
- Given a use case, implement and launch an AWS Serverless Application Model (AWS SAM) template
- Manage environments in individual AWS services (e.g., differentiate between Development, Test, and Production in Amazon API Gateway)

02. Security - 26%

Make authenticated calls to AWS services.
- Communicate required policy based on least privileges required by application.
- Assume an IAM role to access a service
- Use the software development kit (SDK) credential provider on-premises or in the cloud to access AWS services (local credentials vs. instance roles)

Implement encryption using AWS services.
- Encrypt data at rest (client side; server side; envelope encryption) using AWS services
- Encrypt data in transit

Implement application authentication and authorization.
- Add user sign-up and sign-in functionality for applications with Amazon Cognito identity or user pools
- Use Amazon Cognito-provided credentials to write code that accesses AWS services.
- Use Amazon Cognito sync to synchronize user profiles and data
- Use developer-authenticated identities to interact between end user devices, backend authentication, and Amazon Cognito

03. Development with AWS Services - 30%

Write code for serverless applications.
- Compare and contrast server-based vs. serverless model (e.g., micro services, stateless nature of serverless applications, scaling serverless applications, and decoupling layers of serverless applications)
- Configure AWS Lambda functions by defining environment variables and parameters (e.g., memory, time out, runtime, handler)
- Create an API endpoint using Amazon API Gateway
- Create and test appropriate API actions like GET, POST using the API endpoint
- Apply Amazon DynamoDB concepts (e.g., tables, items, and attributes)
- Compute read/write capacity units for Amazon DynamoDB based on application requirements
- Associate an AWS Lambda function with an AWS event source (e.g., Amazon API Gateway, Amazon CloudWatch event, Amazon S3 events, Amazon Kinesis)
- Invoke an AWS Lambda function synchronously and asynchronously

Translate functional requirements into application design.
- Determine real-time vs. batch processing for a given use case
- Determine use of synchronous vs. asynchronous for a given use case
- Determine use of event vs. schedule/poll for a given use case
- Account for tradeoffs for consistency models in an application design

Implement application design into application code.
- Write code to utilize messaging services (e.g., SQS, SNS)
- Use Amazon ElastiCache to create a database cache
- Use Amazon DynamoDB to index objects in Amazon S3
- Write a stateless AWS Lambda function
- Write a web application with stateless web servers (Externalize state)

Write code that interacts with AWS services by using APIs, SDKs, and AWS CLI.
- Choose the appropriate APIs, software development kits (SDKs), and CLI commands for the code components
- Write resilient code that deals with failures or exceptions (i.e., retries with exponential back off and jitter)

04. Refactoring - 10%

Optimize applications to best use AWS services and features.
- Implement AWS caching services to optimize performance (e.g., Amazon ElastiCache, Amazon API Gateway cache)
- Apply an Amazon S3 naming scheme for optimal read performance

Migrate existing application code to run on AWS.
- Isolate dependencies
- Run the application as one or more stateless processes
- Develop in order to enable horizontal scalability
- Externalize state

05. Monitoring and Troubleshooting - 12%

Write code that can be monitored.
- Create custom Amazon CloudWatch metrics
- Perform logging in a manner available to systems operators
- Instrument application source code to enable tracing in AWS X-Ray

Perform root cause analysis on faults found in testing or production.
- Interpret the outputs from the logging mechanism in AWS to identify errors in logs
- Check build and testing history in AWS services (e.g., AWS CodeBuild, AWS CodeDeploy, AWS CodePipeline) to identify issues
- Utilize AWS services (e.g., Amazon CloudWatch, VPC Flow Logs, and AWS X-Ray) to locate a specific faulty component

AWS-CDA (DVA-C01) Certification Questions

01. A developer is testing an application locally and has deployed it to AWS Lambda. To remain under the package size limit, the dependencies were not included in the deployment file. When testing the application remotely, the function does not execute because of missing dependencies. Which approach would resolve the issue?
a) Use the Lambda console editor to update the code and include the missing dependencies.
b) Create an additional .zip file with the missing dependencies and include the file in the original Lambda deployment package.
c) Add references to the missing dependencies in the Lambda function's environment variables.
d) Attach a layer to the Lambda function that contains the missing dependencies.

02. A developer is designing a web application that allows the users to post comments and receive near real-time feedback. Which architectures meet these requirements? (Select TWO.)
a) Create an AWS AppSync schema and corresponding APIs. Use an Amazon DynamoDB table as the data store.
b) Create a WebSocket API in Amazon API Gateway. Use an AWS Lambda function as the backend and an Amazon DynamoDB table as the data store.
c) Create an AWS Elastic Beanstalk application backed by an Amazon RDS database. Configure the application to allow long-lived TCP/IP sockets.
d) Create a GraphQL endpoint in Amazon API Gateway. Use an Amazon DynamoDB table as the data store.
e) Enable WebSocket on Amazon CloudFront. Use an AWS Lambda function as the origin and an Amazon Aurora DB cluster as the data store.

03. A company has AWS workloads in multiple geographical locations. A developer has created an Amazon Aurora database in the us-west-1 Region. The database is encrypted using a customer-managed AWS KMS key. Now the developer wants to create the same encrypted database in the us-east-1 Region. Which approach should the developer take to accomplish this task?
a) Create a snapshot of the database in the us-west-1 Region. Copy the snapshot to the us-east-1 Region and specify a KMS key in the us-east-1 Region. Restore the database from the copied snapshot.
b) Create an unencrypted snapshot of the database in the us-west-1 Region. Copy the snapshot to the us-east-1 Region. Restore the database from the copied snapshot and enable encryption using the KMS key from the us-east-1 Region.
c) Disable encryption on the database. Create a snapshot of the database in the us-west-1 Region. Copy the snapshot to the us-east-1 Region. Restore the database from the copied snapshot.
d) In the us-east-1 Region, choose to restore the latest automated backup of the database from the us-west-1 Region. Enable encryption using a KMS key in the us-east-1 Region.

04. A company is using Amazon API Gateway for its REST APIs in an AWS account. The security team wants to allow only IAM users from another AWS account to access the APIs. Which combination of actions should the security team take to satisfy these requirements? (Select TWO.)
a) Create an IAM permission policy and attach it to each IAM user. Set the APIs method authorization type to AWS_IAM. Use Signature Version 4 to sign the API requests.
b) Create an Amazon Cognito user pool and add each IAM user to the pool. Set the method authorization type for the APIs to COGNITO_USER_POOLS. Authenticate using the IAM credentials in Amazon Cognito and add the ID token to the request headers.
c) Create an Amazon Cognito identity pool and add each IAM user to the pool. Set the method authorization type for the APIs to COGNITO_USER_POOLS. Authenticate using the IAM credentials in Amazon Cognito and add the access token to the request headers.
d) Create a resource policy for the APIs that allows access for each IAM user only.
e) Create an Amazon Cognito authorizer for the APIs that allows access for each IAM user only. Set the method authorization type for the APIs to COGNITO_USER_POOLS.

05. A developer is building an application that transforms text files to .pdf files. The text files are written to a source Amazon S3 bucket by a separate application. The developer wants to read the files as they arrive in Amazon S3 and convert them to .pdf files using AWS Lambda. The developer has written an IAM policy to allow access to Amazon S3 and Amazon CloudWatch Logs. Which actions should the developer take to ensure that the Lambda function has the correct permissions?
a) Create a Lambda execution role using AWS IAM. Attach the IAM policy to the role. Assign the Lambda execution role to the Lambda function.
b) Create a Lambda execution user using AWS IAM. Attach the IAM policy to the user. Assign the Lambda execution user to the Lambda function.
c) Create a Lambda execution role using AWS IAM. Attach the IAM policy to the role. Store the IAM role as an environment variable in the Lambda function.
d) Create a Lambda execution user using AWS IAM. Attach the IAM policy to the user. Store the IAM user credentials as environment variables in the Lambda function.

06. A developer is adding sign-up and sign-in functionality to an application. The application is required to make an API call to a custom analytics solution to log user sign-in events. Which combination of actions should the developer take to satisfy these requirements? (Select TWO.)
a) Use Amazon Cognito to provide the sign-up and sign-in functionality.
b) Use AWS IAM to provide the sign-up and sign-in functionality.
c) Configure an AWS Config rule to make the API call triggered by the post-authentication event.
d) Invoke an Amazon API Gateway method to make the API call triggered by the post-authentication event.
e) Execute an AWS Lambda function to make the API call triggered by the post-authentication event.

07. A developer is adding Amazon ElastiCache for Memcached to a company's existing record storage application to reduce the load on the database and increase performance. The developer has decided to use lazy loading based on an analysis of common record handling patterns. Which pseudocode example would correctly implement lazy loading?
a) record_value = db.query("UPDATE Records SET Details = {1} WHERE ID == {0}", record_key, record_value) cache.set (record_key, record_value) b) record_value = cache.get(record_key) if (record_value == NULL) record_value = db.query("SELECT Details FROM Records WHERE ID == {0}", record_key) cache.set (record_key, record_value) c) record_value = cache.get (record_key) db.query("UPDATE Records SET Details = {1} WHERE ID == {0}", record_key, record_value) d) record_value = db.query("SELECT Details FROM Records WHERE ID == {0}", record_key) if (record_value != NULL) cache.set (record_key, record_value) 08. A company is migrating a legacy application to Amazon EC2. The application uses a user name and password stored in the source code to connect to a MySQL database. The database will be migrated to an Amazon RDS for MySQL DB instance. As part of the migration, the company wants to implement a secure way to store and automatically rotate the database credentials. Which approach meets these requirements? a) Store the database credentials in environment variables in an Amazon Machine Image (AMI). Rotate the credentials by replacing the AMI. b) Store the database credentials in AWS Systems Manager Parameter Store. Configure Parameter Store to automatically rotate the credentials. c) Store the database credentials in environment variables on the EC2 instances. Rotate the credentials by relaunching the EC2 instances. d) Store the database credentials in AWS Secrets Manager. Configure Secrets Manager to automatically rotate the credentials. 09. A developer is building a web application that uses Amazon API Gateway. The developer wants to maintain different environments for development and production (dev and prod) workloads. The API will be backed by an AWS Lambda function with two aliases: one for dev and one for prod. How can this be achieved with the LEAST amount of configuration? 
a) Create a REST API for each environment and integrate the APIs with the corresponding dev and prod aliases of the Lambda function. Then deploy the two APIs to their respective stages and access them using the stage URLs. b) Create one REST API and integrate it with the Lambda function using a stage variable in place of an alias. Then deploy the API to two different stages – dev and prod – and create a stage variable in each stage with different aliases as the values. Access the API using the different stage URLs. c) Create one REST API and integrate it with the dev alias of the Lambda function, and deploy it to a dev environment. Configure a canary release deployment for prod where the canary will integrate with the Lambda prod alias. d) Create one REST API and integrate it with the prod alias of the Lambda function and deploy it to a prod environment. Configure a canary release deployment for dev where the canary will integrate with the Lambda dev alias. 10. A developer wants to track the performance of an application that runs on a fleet of Amazon EC2 instances. The developer wants to view and track statistics across the fleet, such as the average and maximum request latency. The developer would like to be notified immediately if the average response time exceeds a threshold. Which solution meets these requirements? a) Configure a cron job on each instance to measure the response time and update a log file stored in an Amazon S3 bucket every minute. Use an Amazon S3 event notification to trigger an AWS Lambda function that reads the log file and writes new entries to an Amazon Elasticsearch Service (Amazon ES) cluster. Visualize the results in a Kibana dashboard. Configure Amazon ES to send an alert to an Amazon SNS topic when the response time exceeds a threshold. b) Configure the application to write the response times to the system log. 
Install and configure the Amazon Inspector agent to continually read the logs and send the response times to Amazon EventBridge. View the metrics graphs in the EventBridge console. Configure an EventBridge custom rule to send an Amazon SNS notification when the average of the response time metric exceeds the threshold. c) Configure the application to write the response times to a log file. Install and configure the Amazon CloudWatch agent on the instances to stream the application log to CloudWatch Logs. Create a metric filter of the response time from the log. View the metrics graphs in the CloudWatch console. Create a CloudWatch alarm to send an Amazon SNS notification when the average of the response time metric exceeds the threshold. d) Install and configure the AWS Systems Manager Agent on the instances to monitor the response time and send it to Amazon CloudWatch as a custom metric. View the metrics graphs in Amazon QuickSight. Create a CloudWatch alarm to send an Amazon SNS notification when the average of the response time metric exceeds the threshold.

Answers:
Question 01: Answer: d
Question 02: Answer: a, b
Question 03: Answer: a
Question 04: Answer: a, d
Question 05: Answer: a
Question 06: Answer: a, e
Question 07: Answer: b
Question 08: Answer: d
Question 09: Answer: b
Question 10: Answer: c

How to Register for the AWS-CDA Certification Exam? ● Visit the site to register for the AWS-CDA Certification Exam.

The AWS Certified Security - Specialty certification questions and exam summary help you focus on the exam. This guide also helps you stay on track for the SCS-C01 exam and earn a good score in the final exam.

AWS (SCS-C01) Certification Summary

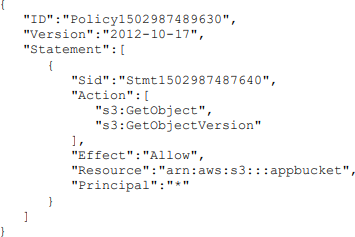

AWS (SCS-C01) Security Specialty Certification Exam Syllabus 01. Incident Response - 12% Given an AWS abuse notice, evaluate the suspected compromised instance or exposed access keys. - Given an AWS Abuse report about an EC2 instance, securely isolate the instance as part of a forensic investigation. - Analyze logs relevant to a reported instance to verify a breach, and collect relevant data. - Capture a memory dump from a suspected instance for later deep analysis or for legal compliance reasons. Verify that the Incident Response plan includes relevant AWS services. - Determine if changes to baseline security configuration have been made. - Determine if list omits services, processes, or procedures which facilitate Incident Response. - Recommend services, processes, procedures to remediate gaps. Evaluate the configuration of automated alerting, and execute possible remediation of security related incidents and emerging issues. - Automate evaluation of conformance with rules for new/changed/removed resources. - Apply rule-based alerts for common infrastructure misconfigurations. - Review previous security incidents and recommend improvements to existing systems. 02. Logging and Monitoring - 20% Design and implement security monitoring and alerting. - Analyze architecture and identify monitoring requirements and sources for monitoring statistics. - Analyze architecture to determine which AWS services can be used to automate monitoring and alerting. - Analyze the requirements for custom application monitoring, and determine how this could be achieved. - Set up automated tools/scripts to perform regular audits. Troubleshoot security monitoring and alerting. - Given an occurrence of a known event without the expected alerting, analyze the service functionality and configuration and remediate. - Given an occurrence of a known event without the expected alerting, analyze the permissions and remediate. 
- Given a custom application which is not reporting its statistics, analyze the configuration and remediate. - Review audit trails of system and user activity. Design and implement a logging solution. - Analyze architecture and identify logging requirements and sources for log ingestion. - Analyze requirements and implement durable and secure log storage according to AWS best practices. - Analyze architecture to determine which AWS services can be used to automate log ingestion and analysis. Troubleshoot logging solutions. - Given the absence of logs, determine the incorrect configuration and define remediation steps. - Analyze logging access permissions to determine incorrect configuration and define remediation steps. - Based on the security policy requirements, determine the correct log level, type, and sources. 03. Infrastructure Security - 26% Design edge security on AWS. - For a given workload, assess and limit the attack surface. - Reduce blast radius (e.g. by distributing applications across accounts and regions). - Choose appropriate AWS and/or third-party edge services such as WAF, CloudFront and Route 53 to protect against DDoS or filter application-level attacks. - Given a set of edge protection requirements for an application, evaluate the mechanisms to prevent and detect intrusions for compliance and recommend required changes. - Test WAF rules to ensure they block malicious traffic. Design and implement a secure network infrastructure. - Disable any unnecessary network ports and protocols. - Given a set of edge protection requirements, evaluate the security groups and NACLs of an application for compliance and recommend required changes. - Given security requirements, decide on network segmentation (e.g. security groups and NACLs) that allow the minimum ingress/egress access required. - Determine the use case for VPN or Direct Connect. - Determine the use case for enabling VPC Flow Logs. 
- Given a description of the network infrastructure for a VPC, analyze the use of subnets and gateways for secure operation. Troubleshoot a secure network infrastructure. - Determine where network traffic flow is being denied. - Given a configuration, confirm security groups and NACLs have been implemented correctly. Design and implement host-based security. - Given security requirements, install and configure host-based protections including Inspector and SSM. - Decide when to use a host-based firewall such as iptables. - Recommend methods for host hardening and monitoring. 04. Identity and Access Management - 20% Design and implement a scalable authorization and authentication system to access AWS resources. - Given a description of a workload, analyze the access control configuration for AWS services and make recommendations that reduce risk. - Given a description of how an organization manages its AWS accounts, verify security of their root user. - Given your organization’s compliance requirements, determine when to apply user policies and resource policies. - Within an organization’s policy, determine when to federate a directory service to IAM. - Design a scalable authorization model that includes users, groups, roles, and policies. - Identify and restrict individual users of data and AWS resources. - Review policies to establish that users/systems are restricted from performing functions beyond their responsibility, and also enforce proper separation of duties. Troubleshoot an authorization and authentication system to access AWS resources. - Investigate a user’s inability to access S3 bucket contents. - Investigate a user’s inability to switch roles to a different account. - Investigate an Amazon EC2 instance’s inability to access a given AWS resource. 05. Data Protection - 22% Design and implement key management and use. - Analyze a given scenario to determine an appropriate key management solution. 
- Given a set of data protection requirements, evaluate key usage and recommend required changes. - Determine and control the blast radius of a key compromise event and design a solution to contain the same. Troubleshoot key management. - Break down the difference between a KMS key grant and IAM policy. - Deduce the precedence given different conflicting policies for a given key. - Determine when and how to revoke permissions for a user or service in the event of a compromise. Design and implement a data encryption solution for data at rest and data in transit. - Given a set of data protection requirements, evaluate the security of the data at rest in a workload and recommend required changes. - Verify policy on a key such that it can only be used by specific AWS services. - Distinguish the compliance state of data through tag-based data classifications and automate remediation. - Evaluate a number of transport encryption techniques and select the appropriate method (i.e. TLS, IPsec, client-side KMS encryption). AWS Security Specialty (SCS-C01) Certification Questions 01. A company is building a data lake on Amazon S3. The data consists of millions of small files containing sensitive information. The Security team has the following requirements for the architecture: - Data must be encrypted in transit. - Data must be encrypted at rest. - The bucket must be private, but if the bucket is accidentally made public, the data must remain confidential. Which combination of steps would meet the requirements? (Select TWO.) a) Enable AES-256 encryption using server-side encryption with Amazon S3-managed encryption keys (SSE-S3) on the S3 bucket. b) Enable default encryption with server-side encryption with AWS KMS-managed keys (SSE-KMS) on the S3 bucket. c) Add a bucket policy that includes a deny if a PutObject request does not include aws:SecureTransport. d) Add a bucket policy with aws:SourceIp to allow uploads and downloads from the corporate intranet only. 
e) Enable Amazon Macie to monitor and act on changes to the data lake's S3 bucket. 02. A Security Engineer is working with a Product team building a web application on AWS. The application uses Amazon S3 to host the static content, Amazon API Gateway to provide RESTful services, and Amazon DynamoDB as the backend data store. The users already exist in a directory that is exposed through a SAML identity provider. Which combination of the following actions should the Engineer take to enable users to be authenticated into the web application and call APIs? (Select THREE.) a) Create a custom authorization service using AWS Lambda. b) Configure a SAML identity provider in Amazon Cognito to map attributes to the Amazon Cognito user pool attributes. c) Configure the SAML identity provider to add the Amazon Cognito user pool as a relying party. d) Configure an Amazon Cognito identity pool to integrate with social login providers. e) Update DynamoDB to store the user email addresses and passwords. f) Update API Gateway to use an Amazon Cognito user pool authorizer. 03. A Security Engineer must set up security group rules for a three-tier application: - Presentation tier – Accessed by users over the web, protected by the security group presentation-sg - Logic tier – RESTful API accessed from the presentation tier through HTTPS, protected by the security group logic-sg - Data tier – SQL Server database accessed over port 1433 from the logic tier, protected by the security group data-sg Which combination of the following security group rules will allow the application to be secure and functional? (Select THREE.) a) presentation-sg: Allow ports 80 and 443 from 0.0.0.0/0 b) data-sg: Allow port 1433 from presentation-sg c) data-sg: Allow port 1433 from logic-sg d) presentation-sg: Allow port 1433 from data-sg e) logic-sg: Allow port 443 from presentation-sg f) logic-sg: Allow port 443 from 0.0.0.0/0 04. 
When testing a new AWS Lambda function that retrieves items from an Amazon DynamoDB table, the Security Engineer notices that the function was not logging any data to Amazon CloudWatch Logs. The following policy was assigned to the role assumed by the Lambda function: { "Version": "2012-10-17", "Statement": [ { "Sid": "Dynamo-1234567", "Action": [ "dynamodb:GetItem" ], "Effect": "Allow", "Resource": "*" } ] } Which least-privilege policy addition would allow this function to log properly? a) { "Sid": "Logging-12345", "Resource": "*", "Action": [ "logs:*" ], "Effect": "Allow" } b) { "Sid": "Logging-12345", "Resource": "*", "Action": [ "logs:CreateLogStream" ], "Effect": "Allow" } c) { "Sid": "Logging-12345", "Resource": "*", "Action": [ "logs:CreateLogGroup", "logs:CreateLogStream", "logs:PutLogEvents" ], "Effect": "Allow" } d) { "Sid": "Logging-12345", "Resource": "*", "Action": [ "logs:CreateLogGroup", "logs:CreateLogStream", "logs:DeleteLogGroup", "logs:DeleteLogStream", "logs:GetLogEvents", "logs:PutLogEvents" ], "Effect": "Allow" } 05. A company decides to place database hosts in its own VPC, and to set up VPC peering to different VPCs containing the application and web tiers. The application servers are unable to connect to the database. Which network troubleshooting steps should be taken to resolve the issue? (Select TWO.) a) Check to see if the application servers are in a private subnet or public subnet. b) Check the route tables for the application server subnets for routes to the VPC peering connection. c) Check the NACLs for the database subnets for rules that allow traffic from the internet. d) Check the database security groups for rules that allow traffic from the application servers. e) Check to see if the database VPC has an internet gateway. 06. A Security Engineer has been informed that a user’s access key has been found on GitHub. 
The Engineer must ensure that this access key cannot continue to be used, and must assess whether the access key was used to perform any unauthorized activities. Which steps must be taken to perform these tasks? a) Review the user's IAM permissions and delete any unrecognized or unauthorized resources. b) Delete the user, review Amazon CloudWatch Logs in all regions, and report the abuse. c) Delete or rotate the user’s key, review the AWS CloudTrail logs in all regions, and delete any unrecognized or unauthorized resources. d) Instruct the user to remove the key from the GitHub submission, rotate keys, and re-deploy any instances that were launched. 07. An Application team is designing a solution with two applications. The Security team wants the applications' logs to be captured in two different places, because one of the applications produces logs with sensitive data. Which solution meets the requirement with the LEAST risk and effort? a) Use Amazon CloudWatch Logs to capture all logs, write an AWS Lambda function that parses the log file, and move sensitive data to a different log. b) Use Amazon CloudWatch Logs with two log groups, with one for each application, and use an AWS IAM policy to control access to the log groups, as required. c) Aggregate logs into one file, then use Amazon CloudWatch Logs, and then design two CloudWatch metric filters to filter sensitive data from the logs. d) Add logic to the application that saves sensitive data logs on the Amazon EC2 instances' local storage, and write a batch script that logs into the Amazon EC2 instances and moves sensitive logs to a secure location. 08. A corporate cloud security policy states that communication between the company's VPC and KMS must travel entirely within the AWS network and not use public service endpoints. Which combination of the following actions MOST satisfies this requirement? (Select TWO.) 
a) Add the aws:sourceVpce condition to the AWS KMS key policy referencing the company's VPC endpoint ID. b) Remove the VPC internet gateway from the VPC and add a virtual private gateway to the VPC to prevent direct, public internet connectivity. c) Create a VPC endpoint for AWS KMS with private DNS enabled. d) Use the KMS Import Key feature to securely transfer the AWS KMS key over a VPN. e) Add the following condition to the AWS KMS key policy: "aws:SourceIp": "10.0.0.0/16". 09. A company is hosting a web application on AWS and is using an Amazon S3 bucket to store images. Users should have the ability to read objects in the bucket. A Security Engineer has written the following bucket policy to grant public read access: Attempts to read an object, however, receive the error: "Action does not apply to any resource(s) in statement." What should the Engineer do to fix the error?

a) Change the IAM permissions by applying PutBucketPolicy permissions. b) Verify that the policy has the same name as the bucket name. If not, make it the same. c) Change the resource section to "arn:aws:s3:::appbucket/*". d) Add an s3:ListBucket action. 10. A Security Engineer must ensure that all API calls are collected across all company accounts, and that they are preserved online and are instantly available for analysis for 90 days. For compliance reasons, this data must be restorable for 7 years. Which steps must be taken to meet the retention needs in a scalable, cost-effective way? a) Enable AWS CloudTrail logging across all accounts to a centralized Amazon S3 bucket with versioning enabled. Set a lifecycle policy to move the data to Amazon Glacier daily, and expire the data after 90 days. b) Enable AWS CloudTrail logging across all accounts to S3 buckets. Set a lifecycle policy to expire the data in each bucket after 7 years. c) Enable AWS CloudTrail logging across all accounts to Amazon Glacier. Set a lifecycle policy to expire the data after 7 years. d) Enable AWS CloudTrail logging across all accounts to a centralized Amazon S3 bucket. Set a lifecycle policy to move the data to Amazon Glacier after 90 days, and expire the data after 7 years.

Answers:
Question 01: Answer: b, c
Question 02: Answer: b, c, f
Question 03: Answer: a, c, e
Question 04: Answer: c
Question 05: Answer: b, d
Question 06: Answer: c
Question 07: Answer: b
Question 08: Answer: a, c
Question 09: Answer: c
Question 10: Answer: d

How to Register for the Security Specialty Certification Exam? ● Visit the site to register for the Security Specialty Certification Exam.

The AWS Certified Database - Specialty certification questions and exam summary help you focus on the exam. This guide also helps you stay on track for the DBS-C01 exam and earn a good score in the final exam.

AWS (DBS-C01) Certification Summary

AWS (DBS-C01) Database Specialty Certification Exam Syllabus 01. Workload-Specific Database Design - 26% Select appropriate database services for specific types of data and workloads. - Differentiate between ACID vs. BASE workloads - Explain appropriate uses of types of databases (e.g., relational, key-value, document, in-memory, graph, time series, ledger) - Identify use cases for persisted data vs. ephemeral data Determine strategies for disaster recovery and high availability. - Select Region and Availability Zone placement to optimize database performance - Determine implications of Regions and Availability Zones on disaster recovery/high availability strategies - Differentiate use cases for read replicas and Multi-AZ deployments Design database solutions for performance, compliance, and scalability. - Recommend serverless vs. instance-based database architecture - Evaluate requirements for scaling read replicas - Define database caching solutions - Evaluate the implications of partitioning, sharding, and indexing - Determine appropriate instance types and storage options - Determine auto-scaling capabilities for relational and NoSQL databases - Determine the implications of Amazon DynamoDB adaptive capacity - Determine data locality based on compliance requirements Compare the costs of database solutions. - Determine cost implications of Amazon DynamoDB capacity units, including on-demand vs. provisioned capacity - Determine costs associated with instance types and automatic scaling - Design for costs including high availability, backups, multi-Region, Multi-AZ, and storage type options - Compare data access costs 02. Deployment and Migration - 20% Automate database solution deployments. - Evaluate application requirements to determine components to deploy - Choose appropriate deployment tools and services (e.g., AWS CloudFormation, AWS CLI) Determine data preparation and migration strategies. 
- Determine the data migration method (e.g., snapshots, replication, restore) - Evaluate database migration tools and services (e.g., AWS DMS, native database tools) - Prepare data sources and targets - Determine schema conversion methods (e.g., AWS Schema Conversion Tool) - Determine heterogeneous vs. homogeneous migration strategies Execute and validate data migration. - Design and script data migration - Run data extraction and migration scripts - Verify the successful load of data 03. Management and Operations - 18% Determine maintenance tasks and processes. - Account for the AWS shared responsibility model for database services - Determine appropriate maintenance window strategies - Differentiate between major and minor engine upgrades Determine backup and restore strategies. - Identify the need for automatic and manual backups/snapshots - Differentiate backup and restore strategies (e.g., full backup, point-in-time, encrypting backups cross-Region) - Define retention policies - Correlate the backup and restore to recovery point objective (RPO) and recovery time objective (RTO) requirements Manage the operational environment of a database solution. - Orchestrate the refresh of lower environments - Implement configuration changes (e.g., in Amazon RDS option/parameter groups or Amazon DynamoDB indexing changes) - Automate operational tasks - Take action based on AWS Trusted Advisor reports 04. Monitoring and Troubleshooting - 18% Determine monitoring and alerting strategies. - Evaluate monitoring tools (e.g., Amazon CloudWatch, Amazon RDS Performance Insights, database native) - Determine appropriate parameters and thresholds for alert conditions - Use tools to notify users when thresholds are breached (e.g., Amazon SNS, Amazon SQS, Amazon CloudWatch dashboards) Troubleshoot and resolve common database issues. 
- Identify, evaluate, and respond to categories of failures (e.g., troubleshoot connectivity; instance, storage, and partitioning issues) - Automate responses when possible Optimize database performance. - Troubleshoot database performance issues - Identify appropriate AWS tools and services for database optimization - Evaluate the configuration, schema design, queries, and infrastructure to improve performance 05. Database Security - 18% Encrypt data at rest and in transit. - Encrypt data in relational and NoSQL databases - Apply SSL connectivity to databases - Implement key management (e.g., AWS KMS, AWS CloudHSM) Evaluate auditing solutions. - Determine auditing strategies for structural/schema changes (e.g., DDL) - Determine auditing strategies for data changes (e.g., DML) - Determine auditing strategies for data access (e.g., queries) - Determine auditing strategies for infrastructure changes (e.g., AWS CloudTrail) - Enable the export of database logs to Amazon CloudWatch Logs Determine access control and authentication mechanisms. - Recommend authentication controls for users and roles (e.g., IAM, native credentials, Active Directory) - Recommend authorization controls for users (e.g., policies) Recognize potential security vulnerabilities within database solutions. - Determine security group rules and NACLs for database access - Identify relevant VPC configurations (e.g., VPC endpoints, public vs. private subnets, demilitarized zone) - Determine appropriate storage methods for sensitive data AWS Database Specialty (DBS-C01) Certification Questions 01. A company undergoing a security audit has determined that its database administrators are presently sharing an administrative database user account for the company’s Amazon Aurora deployment. To support proper traceability, governance, and compliance, each database administration team member must start using individual, named accounts. Furthermore, long-term database user credentials should not be used. 
Which solution should a database specialist implement to meet these requirements? a) Use the AWS CLI to fetch the AWS IAM users and passwords for all team members. For each IAM user, create an Aurora user with the same password as the IAM user. b) Enable IAM database authentication on the Aurora cluster. Create a database user for each team member without a password. Attach an IAM policy to each administrator’s IAM user account that grants the connect privilege using their database user account. c) Create a database user for each team member. Share the new database user credentials with the team members. Have users change the password on the first login to the same password as their IAM user. d) Create an IAM role and associate an IAM policy that grants the connect privilege using the shared account. Configure a trust policy that allows the administrator’s IAM user account to assume the role. 02. An operations team in a large company wants to centrally manage resource provisioning for its development teams across multiple accounts. When a new AWS account is created, the developers require full privileges for a database environment that uses the same configuration, data schema, and source data as the company’s production Amazon RDS for MySQL DB instance. How can the operations team achieve this? a) Enable the source DB instance to be shared with the new account so the development team may take a snapshot. Create an AWS CloudFormation template to launch the new DB instance from the snapshot. b) Create an AWS CLI script to launch the approved DB instance configuration in the new account. Create an AWS DMS task to copy the data from the source DB instance to the new DB instance. c) Take a manual snapshot of the source DB instance and share the snapshot privately with the new account. Specify the snapshot ARN in an RDS resource in an AWS CloudFormation template and use StackSets to deploy to the new account. 
d) Create a DB instance read replica of the source DB instance. Share the read replica with the new AWS account. 03. A global company wants to run an application in several AWS Regions to support a global user base. The application will need a database that can support a high volume of low-latency reads and writes that is expected to vary over time. The data must be shared across all of the Regions to support dynamic company-wide reports. Which database meets these requirements? a) Use Amazon Aurora Serverless and configure endpoints in each Region. b) Use Amazon RDS for MySQL and deploy read replicas in an auto scaling group in each Region. c) Use Amazon DocumentDB (with MongoDB compatibility) and configure read replicas in an auto scaling group in each Region. d) Use Amazon DynamoDB global tables and configure DynamoDB auto scaling for the tables. 04. A company’s customer relationship management application uses an Amazon RDS for PostgreSQL Multi-AZ database. The database size is approximately 100 GB. A database specialist has been tasked with developing a cost-effective disaster recovery plan that will restore the database in a different Region within 2 hours. The restored database should not be missing more than 8 hours of transactions. What is the MOST cost-effective solution that meets the availability requirements? a) Create an RDS read replica in the second Region. For disaster recovery, promote the read replica to a standalone instance. b) Create an RDS read replica in the second Region using a smaller instance size. For disaster recovery, scale the read replica and promote it to a standalone instance. c) Schedule an AWS Lambda function to create an hourly snapshot of the DB instance and another Lambda function to copy the snapshot to the second Region. For disaster recovery, create a new RDS Multi-AZ DB instance from the last snapshot. d) Create a new RDS Multi-AZ DB instance in the second Region. Configure an AWS DMS task for ongoing replication. 05. 
A company’s ecommerce application stores order transactions in an Amazon RDS for MySQL database. The database has run out of available storage and the application is currently unable to take orders. Which action should a database specialist take to resolve the issue in the shortest amount of time? a) Add more storage space to the DB instance using the ModifyDBInstance action. b) Create a new DB instance with more storage space from the latest backup. c) Change the DB instance status from STORAGE_FULL to AVAILABLE. d) Configure a read replica with more storage space. 06. A database specialist is troubleshooting complaints from an application's users who are experiencing performance issues when saving data in an Amazon ElastiCache for Redis cluster with cluster mode disabled. The database specialist finds that the performance issues are occurring during the cluster's backup window. The cluster runs in a replication group containing three nodes. Memory on the nodes is fully utilized. Organizational policies prohibit the database specialist from changing the backup window time. How could the database specialist address the performance concern? (Select TWO.) a) Add an additional node to the cluster in the same Availability Zone as the primary. b) Configure the backup job to take a snapshot of a read replica. c) Increase the local instance storage size for the cluster nodes. d) Increase the reserved-memory-percent parameter value. e) Configure the backup process to flush the cache before taking the backup. 07. A media company is running a critical production application that uses Amazon RDS for PostgreSQL with Multi-AZ deployments. The database size is currently 25 TB. The IT director wants to migrate the database to Amazon Aurora PostgreSQL with minimal effort and minimal disruption to the business. What is the best migration strategy to meet these requirements? 
a) Use the AWS Schema Conversion Tool (AWS SCT) to copy the database schema from RDS for PostgreSQL to an Aurora PostgreSQL DB cluster. Create an AWS DMS task to copy the data. b) Create a script to continuously back up the RDS for PostgreSQL instance using pg_dump, and restore the backup to an Aurora PostgreSQL DB cluster using pg_restore. c) Create a read replica from the existing production RDS for PostgreSQL instance. Check that the replication lag is zero and then promote the read replica as a standalone Aurora PostgreSQL DB cluster. d) Create an Aurora Replica from the existing production RDS for PostgreSQL instance. Stop the writes on the master, check that the replication lag is zero, and then promote the Aurora Replica as a standalone Aurora PostgreSQL DB cluster. 08. A company has a highly available production 10 TB SQL Server relational database running on Amazon EC2. Users have recently been reporting performance and connectivity issues. A database specialist has been asked to configure a monitoring and alerting strategy that will provide metrics visibility and notifications to troubleshoot these issues. Which solution will meet these requirements? a) Configure AWS CloudTrail logs to monitor and detect signs of potential problems. Create an AWS Lambda function that is triggered when specific API calls are made and send notifications to an Amazon SNS topic. b) Install an Amazon Inspector agent on the DB instance. Configure the agent to stream server and database activity to Amazon CloudWatch Logs. Configure metric filters and alarms to send notifications to an Amazon SNS topic. c) Migrate the database to Amazon RDS for SQL Server and use Performance Insights to monitor and detect signs of potential problems. Create a scheduled AWS Lambda function that retrieves metrics from the Performance Insights API and send notifications to an Amazon SNS topic. 
d) Configure Amazon CloudWatch Application Insights for .NET and SQL Server to monitor and detect signs of potential problems. Configure CloudWatch Events to send notifications to an Amazon SNS topic.

09. A medical company is planning to migrate its on-premises PostgreSQL database, along with application and web servers, to AWS. Amazon RDS for PostgreSQL is being considered as the target database engine. Access to the database should be limited to application servers and a bastion host in a VPC. Which solution meets the security requirements?
a) Launch the RDS for PostgreSQL database in a DB subnet group containing private subnets. Modify the pg_hba.conf file on the DB instance to allow connections from only the application servers and bastion host.
b) Launch the RDS for PostgreSQL database in a DB subnet group containing public subnets. Create a new security group with inbound rules to allow connections from only the security groups of the application servers and bastion host. Attach the new security group to the DB instance.
c) Launch the RDS for PostgreSQL database in a DB subnet group containing private subnets. Create a new security group with inbound rules to allow connections from only the security groups of the application servers and bastion host. Attach the new security group to the DB instance.
d) Launch the RDS for PostgreSQL database in a DB subnet group containing private subnets. Create a NACL attached to the VPC and private subnets. Modify the inbound and outbound rules to allow connections to and from the application servers and bastion host.

10. A company's security department has mandated that their existing Amazon RDS for MySQL DB instance be encrypted at rest. What should a database specialist do to meet this requirement?
a) Modify the database to enable encryption. Apply this setting immediately without waiting for the next scheduled maintenance window.
b) Export the database to an Amazon S3 bucket with encryption enabled.
Create a new database and import the export file.
c) Create a snapshot of the database. Create an encrypted copy of the snapshot. Create a new database from the encrypted snapshot.
d) Create a snapshot of the database. Restore the snapshot into a new database with encryption enabled.

Answers:
Question: 01: Answer: b
Question: 02: Answer: c
Question: 03: Answer: d
Question: 04: Answer: c
Question: 05: Answer: a
Question: 06: Answer: b, d
Question: 07: Answer: d
Question: 08: Answer: d
Question: 09: Answer: c
Question: 10: Answer: c

How to Register for the Database Specialty Certification Exam?
● Visit the site to register for the Database Specialty Certification Exam.

The AWS Certified Advanced Networking - Specialty certification questions and exam summary help you focus on the exam. This guide also helps you stay on the ANS-C00 exam track and get certified with a good score in the final exam.

AWS (ANS-C00) Certification Summary